Nvidia’s days of absolute dominance in AI could be numbered because of this key performance benchmark

Rodrigo Liang, CEO and co-founder of SambaNova Systems

It’s difficult to chip away at a headstart that is essentially trillions of dollars strong, but that’s what Nvidia’s competitors are attempting. They may have the best chance of competing in a type of artificial intelligence computing called inference.

Inference is the production stage of AI computing. Once training a model is done, inference chips produce the outputs and complete tasks based on that training — whether that’s generating a picture or written answers to a prompt.

Rodrigo Liang cofounded SambaNova Systems in 2017 with the aim of going after Nvidia’s already obvious lead. But back then, the artificial intelligence ecosystem was even younger, and inference loads were tiny. With foundation models advancing in size and accuracy, the flip from training machine learning models to using them is coming into view.

Last month, Nvidia CFO Colleen Kress said the company’s data center workloads had reached 40% inference. Liang told B-17 he expects 90% of AI computing workloads will be in inference in the not-too-distant future.

That’s why several startups are charging aggressively into the inference market — emphasizing where they might outperform the goliath in the space.

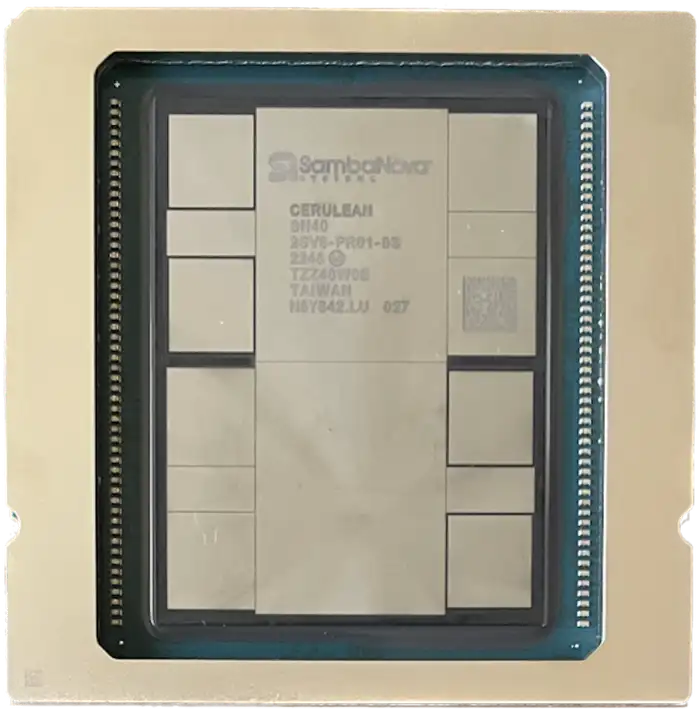

SambaNova uses a reconfigurable dataflow unit or RDU instead of Nvidia’s and AMD’s graphics processing units. Liang’s firm purports that its architecture is a better fit for machine learning models since it was designed for that purpose, and not for rendering graphics. It’s an argument that fellow Nvidia challenger Cerebras CEO Andrew Feldman references too.

Nvidia also agrees that inference is the larger market, according to Bernstein analysts who met with Nvidia CFO Colette Kress last week.

Kress believes Nvidia’s “offering is the best for inferencing given their networking strength, the liquid cooling offering, and their ARM CPU, all of which are essential for optimal inferencing,” the analysts wrote. Kress also noted that most of Nvidia’s inference revenue currently comes from recommender engines and search. Nvidia declined to comment for this report.

Liang said the inference market will begin to mature within roughly six months.

SambaNova uses a different architecture for it’s chip than Nvidia or AMD.

Startups are betting on computing speed

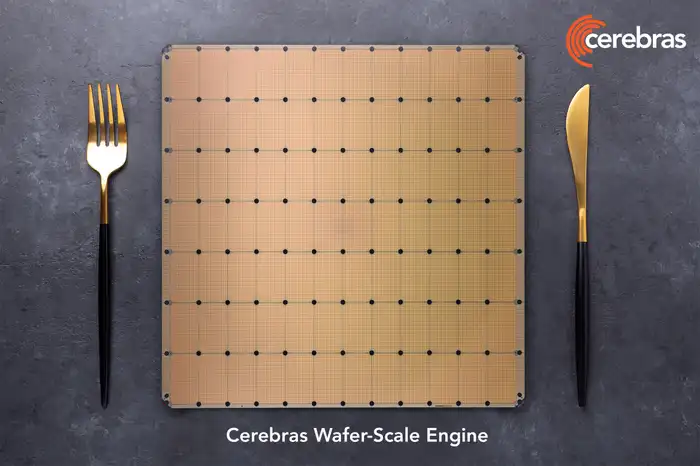

To pull customers away from Nvidia, newer players like Groq, Cerebras, and SambaNova are touting speed. In fact, Cerebras and SambaNova claim to offer the fastest inference computing in the world. And neither use GPUs, the type of chip leaders Nvidia and AMD promote.

According to SambaNova, its RDUs are ideal for agentic AI, which can complete functions without much instruction. Speed is an important factor when multiple AI models talk to each other and waiting for an answer can dampen the magic of generative AI.

But there isn’t just one measure of inference speed. The specifications of each model, such as Meta’s Llama, Anthropic’s Claude, or OpenAI’s o1, determine how fast results are generated.

Speed in AI computing results from several engineering factors that go beyond the chip itself. And the way chips are networked together can impact their performance, which means Nvidia chips in one data center may perform differently than the same chip in another data center.

The number of tokens per second that can be consumed (when a prompt goes in) and generated (when a response comes out) is a common metric for AI computing speed. Tokens are a base unit of data, where data could be pixels, words, audio, and beyond. But tokens per second don’t account for latency or lag — which can stem from multiple factors.

It is also difficult to make an apples-to-apples comparison between hardware as performance depends on how the hardware is set up and the software that runs it. Additionally, the models themselves are improving constantly.

In the hopes of advancing the market for inference faster, and edge their way into a market dominated by Nvidia, several newer hardware companies are trying different business models to bypass direct competition with Nvidia and go straight to the companies building AI.

SambaNova offers Meta’s open-source Llama foundation model through its cloud service and Cerebras and Groq have launched similar services. Thus, these companies are competing with both chip design companies like Nvidia and AI foundation model companies like OpenAI.

Artificialanalysis.ai provides public information comparing models that offer inference-as-a-service via API. On Wednesday, the site showed that Cerebras, SambaNova, and Groq were indeed the three fastest APIs for Met’a’s Llama 3.1 70B and 8B models.

Nvidia isn’t included in this comparison because the company doesn’t provide inference-as-a-service. MLPerf shares inference performance benchmarks for hardware computing speed. Nvidia is among the best performing in this dataset, but its startup competitors are not included.

“I think you’re gonna see this inference game open up for all these other alternatives in a much, much broader way than the pre-training market opened up,” Liang said. “Because it was so concentrated with very few players, Jensen could personally negotiate those deals in a way that, for startups, it’s hard to break up,” he continued.

Cerebras’s AI chip is roughly size of a dinner plate.

What’s the catch?

Semianalysis chief analyst Dylan Patel said chip buyers have to consider not just performance, but also all the advantages and expenses that make a difference over a chip’s lifetime. From his view, these startup chips can start to show cracks.

Patel told B-17 that “GPUs offer superior total cost of ownership per token.”

SambaNova and Cerebras disagree with this.

“There is typically a tradeoff when it comes to speed and cost. Higher inference speed can mean a larger hardware footprint, which in turn demands higher costs,” Liang said, adding that SambaNova makes up for this tradeoff by delivering speed and capacity with fewer chips and, therefore, lower costs.

Cerebras CEO Andrew Feldman disputed the view that GPUs have a lower total cost of ownership, saying “While GPU manufacturers may claim leadership in TCO, this is not a function of technology but rather the big bull horn they have,” he said.