OpenAI whistleblower found dead by apparent suicide

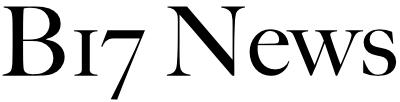

Suchir Balaji, 26, had worked as a researcher at OpenAI for four years. He left the company in August and accused his former employer of violating copyright law.

Suchir Balaji, a former OpenAI researcher of four years, was found dead in his San Francisco apartment on November 26, according to multiple reports. He was 26.

Balaji had recently criticized OpenAI over how the startup collects data from the internet to train its AI models. One of his jobs at OpenAI was to gather information for the development of the company’s powerful GPT-4 AI model.

A spokesperson for the San Francisco Police Department told B-17 that “no evidence of foul play was found during the initial investigation.”

David Serrano Sewell, executive director of the city’s office of chief medical examiner, told the San Jose Mercury News, “the manner of death has been determined to be suicide.” A spokesperson for the city’s medical examiner’s office did not immediately respond to a request for comment from B-17.

“We are devastated to learn of this incredibly sad news today and our hearts go out to Suchir’s loved ones during this difficult time,” an OpenAI spokesperson said in a statement to B-17.

In October, Balaji published an essay on his personal website that raised questions about what is considered “fair use” and whether it can apply to the training data OpenAI used for its highly popular ChatGPT model.

“While generative models rarely produce outputs that are substantially similar to any of their training inputs, the process of training a generative model involves making copies of copyrighted data,” Balaji wrote. “If these copies are unauthorized, this could potentially be considered copyright infringement, depending on whether or not the specific use of the model qualifies as ‘fair use.’ Because fair use is determined on a case-by-case basis, no broad statement can be made about when generative AI qualifies for fair use.”

Balaji said in his personal essay that training AI models with a mass of data copied from the internet for free potentially damages online knowledge communities.

He cited a research paper that described the example of Stack Overflow, a coding Q&A website that saw significant declines in traffic and user engagement after ChatGPT and AI models such as GPT-4 were released.

Large language models and chatbots answer user questions directly, so people have less need to visit the original sources for answers.

In the case of Stack Overflow, chatbots and LLMs are answering coding questions, so fewer people visit Stack Overflow to ask the community for help. This means the coding website generates less new human content.

Elon Musk has warned about this, calling the phenomenon “Death by LLM.”

OpenAI faces multiple lawsuits that accuse the company of copyright infringement.

The New York Times sued OpenAI last year, accusing the startup and Microsoft of "unlawful use of The Times's work to create artificial intelligence products that compete with it."

In an interview with the Times that was published in October, Balaji said chatbots like ChatGPT are stripping away the commercial value of people's work and services.

“This is not a sustainable model for the internet ecosystem as a whole,” he told the publication.

In a statement to the Times about Balaji’s accusations, OpenAI said: “We build our A.I. models using publicly available data, in a manner protected by fair use and related principles, and supported by longstanding and widely accepted legal precedents. We view this principle as fair to creators, necessary for innovators, and critical for US competitiveness.”

Balaji was later named in the Times' lawsuit against OpenAI as a "custodian," an individual who holds relevant documents for the case, according to a letter filed on November 18 that was viewed by B-17.